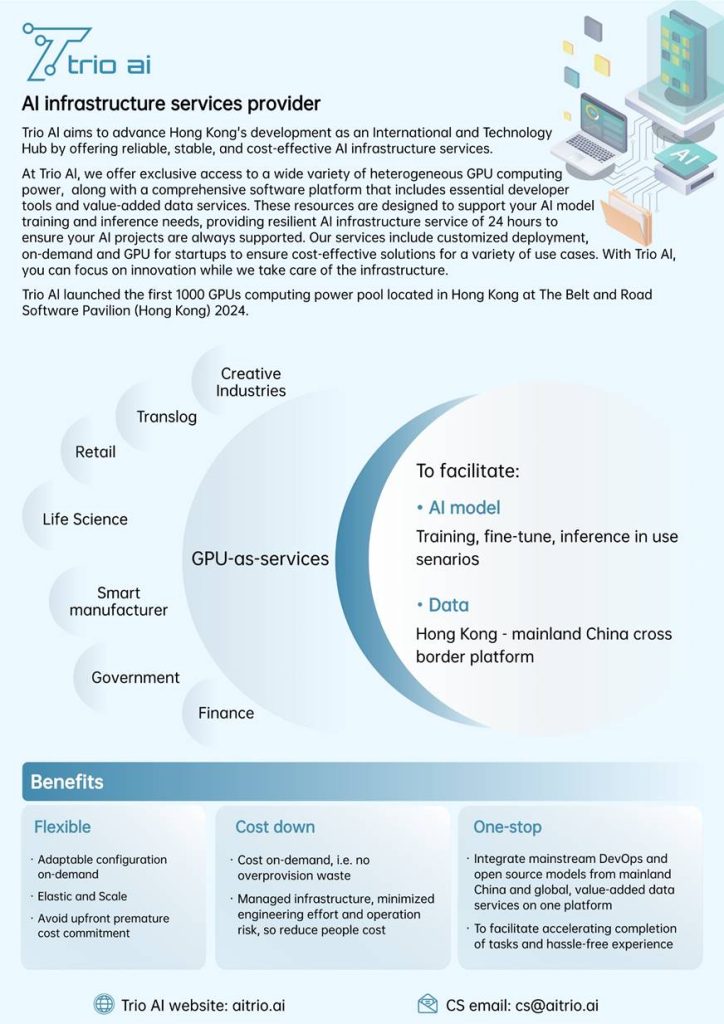

Trio AI GPU-as-a-Service (GaaS) is a managed infrastructure service backed by an HKD232 million capital investment in a thousand MetaX GPU cards in Hong Kong. By partnering with Trio AI, UDS Data Systems Limited enables organizations to accelerate AI adoption without the long procurement cycles, fragmented toolchains, or compliance uncertainty normally associated with large-scale AI infrastructure builds.

With Trio AI GaaS, enterprises, research institutions, and solution builders gain secure, elastic access to a thousand‑card MetaX GPU cluster, a wide choice of open-source large language models (LLMs) and orchestration software, data value‑added services, and a powerful chip scheduling and management platform, all backed by expert integration, cost governance, and lifecycle support from UDS.

Core Platform Advantages

Heterogeneous GPU Resource Pool

- Leverage both the Trio AI thousand‑card MetaX cluster hosted in Hong Kong and a heterogeneous GPU resource pool in China.

- Flexible matching of workloads (training vs. inference vs. fine‑tuning) to the most cost‑effective GPU type.

- Abstracted hardware layer reduces vendor lock‑in and supply chain risk.
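
As a rough illustration of matching a workload to the most cost‑effective GPU type, the sketch below picks the cheapest GPU class with enough memory for a job. It is a minimal example and not the platform's actual placement logic; the `GpuType` class, the pool entries, and all prices are hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GpuType:
    name: str
    memory_gb: int
    hourly_cost: float  # hypothetical price units, not real pricing


def cheapest_fit(required_memory_gb, pool):
    """Return the lowest-cost GPU type with enough memory, or None if nothing fits."""
    candidates = [g for g in pool if g.memory_gb >= required_memory_gb]
    return min(candidates, key=lambda g: g.hourly_cost, default=None)


# Hypothetical pool: names, memory sizes, and prices are placeholders.
POOL = [
    GpuType("gpu-small", 24, 1.0),
    GpuType("gpu-medium", 48, 2.5),
    GpuType("gpu-large", 80, 4.0),
]

choice = cheapest_fit(40, POOL)  # a job needing 40 GB of GPU memory
```

A real scheduler would also weigh interconnect, availability, and queue depth; memory and price are used here only to keep the idea visible.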

Full-Stack Model Enablement

- Pre-installed catalog of 20+ open-source / commercial LLMs (including DeepSeek V3 / R1, Llama variants).

- One-click environment provisioning for fine‑tuning, distillation, and evaluation.

- Distillation workflows to reduce inference cost (lighter student models).

Dedicated Chip & Scheduling Management Platform

- Intelligent workload placement, GPU queueing, and multi-tenant isolation.

- Utilization, throughput, and cost dashboards enabling FinOps oversight.

- API / UI control for developers, MLOps engineers, and data scientists.
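
To make GPU queueing across tenants more concrete, here is a minimal sketch of a priority queue with a simple fair-share tie-break. It assumes that a lower priority number means more urgent and that ties go to the tenant with less recorded usage at submission time; the `JobQueue` class and its methods are hypothetical and do not describe the Trio AI scheduler's API.

```python
import heapq
import itertools


class JobQueue:
    """Toy multi-tenant queue: priority first, then lower tenant usage, then FIFO."""

    def __init__(self):
        self._heap = []
        self._counter = itertools.count()  # submission order for FIFO ties
        self._usage = {}  # tenant -> GPU-hours consumed so far

    def record_usage(self, tenant, gpu_hours):
        self._usage[tenant] = self._usage.get(tenant, 0.0) + gpu_hours

    def submit(self, tenant, job_id, priority):
        # Usage is sampled at submission time (a simplification).
        usage = self._usage.get(tenant, 0.0)
        heapq.heappush(
            self._heap, (priority, usage, next(self._counter), tenant, job_id)
        )

    def next_job(self):
        """Pop the next (tenant, job_id) to run, or None when the queue is empty."""
        if not self._heap:
            return None
        _, _, _, tenant, job_id = heapq.heappop(self._heap)
        return tenant, job_id
```

With equal priorities, a tenant that has consumed fewer GPU-hours is served first, which is one simple way to approximate fair sharing.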

Enterprise Readiness & Governance

Security & Isolation

- Trio AI provides a dedicated, managed AI infrastructure for a single client within a secure, third-party data center. This ensures your data and workloads are completely isolated from other customers.

- Role-based access controls and audit logging for model, dataset, and secret operations.

- Support for private networking / controlled ingress & egress patterns.

Compliance Alignment

- Designed to assist with Hong Kong data privacy and industry governance expectations.

- Data residency options and cross-border transfer assessment support.

- Traceability of model lineage (base → fine‑tune → distilled variant).

Operational Reliability

- High-availability cluster architecture with monitoring of GPU health, thermals, and utilization.

- Proactive capacity planning to avoid starved training windows.

- Performance baselining for repeatable experiments (tokens/sec, latency P95, throughput/$).
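
The baseline metrics named above can be computed from raw measurements along the following lines. This is an illustrative sketch: the function names are hypothetical, P95 uses the nearest-rank definition (one of several common conventions), and throughput/$ is interpreted here as tokens processed per unit of GPU spend.

```python
def p95(latencies_ms):
    """Nearest-rank 95th percentile of a list of latency samples."""
    if not latencies_ms:
        raise ValueError("no latency samples")
    ordered = sorted(latencies_ms)
    rank = (95 * len(ordered) + 99) // 100  # integer ceil(0.95 * n)
    return ordered[rank - 1]


def tokens_per_second(tokens, seconds):
    return tokens / seconds


def tokens_per_dollar(tokens, gpu_hours, hourly_cost):
    """Throughput per unit cost: tokens divided by total GPU spend."""
    return tokens / (gpu_hours * hourly_cost)
```

Recording the percentile definition alongside the numbers helps keep baselines comparable between runs.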

Observability & Metrics

- Real-time dashboards: GPU allocation, queue depth, job success/failure, cost per training run.

- Hooks for integration with existing SIEM / log analytics platforms.

- Model performance evaluation pipelines (quality, drift, hallucination profiling).

Data Center Certifications

- ISO/IEC 27001:2013

- ISO 45001:2018

- ISO 9001:2015

- ISO 14001:2015

- ISO 50001:2018

Lifecycle Services via UDS

Assessment & Architecture

- Workload profiling (model size, sequence length, concurrency, data volume).

- Infrastructure blueprint: selection of GPU class, storage tier (object / parallel FS), and network topology.

MLOps Integration

- CI/CD pipelines for model artifact promotion (dev → staging → production).

- Automated fine‑tuning + evaluation loops with reproducibility safeguards (environment pinning, seed control).

Data Engineering & RAG Enablement

- Secure ingestion connectors (databases, file shares, documents).

- Embedding generation + vector index build + latency tuning.

- Policy filters for sensitive field masking.
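
A policy filter for sensitive field masking might look like the sketch below, applied to records before they are embedded or indexed. The field names in `SENSITIVE_FIELDS`, the placeholder strings, and the simple email pattern are assumptions made for illustration, not the service's actual policy engine.

```python
import re

# Simplified email pattern for illustration; real policies usually need more.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")

# Hypothetical policy: fields whose values are always masked.
SENSITIVE_FIELDS = {"id_number", "phone"}


def mask_record(record):
    """Return a copy of the record with sensitive values masked."""
    masked = {}
    for key, value in record.items():
        if key in SENSITIVE_FIELDS:
            masked[key] = "***"
        elif isinstance(value, str):
            # Redact email addresses embedded in free text.
            masked[key] = EMAIL_RE.sub("[EMAIL]", value)
        else:
            masked[key] = value
    return masked
```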

Cost & Performance Optimization

- Right‑sizing GPU memory footprints (mixed precision, quantization, activation checkpointing).

- Distillation strategy to achieve target latency and token cost per output.

- Recommendation reports: reserved vs. on‑demand mix, consolidation opportunities.
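
As a worked example of right‑sizing GPU memory, the memory needed for model weights alone is roughly the parameter count multiplied by the bytes per parameter at a given precision. The sketch below covers weights only (activations, KV cache, and optimizer state add more), and the helper name is hypothetical.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params, precision):
    """Approximate weight memory in GB (10^9 bytes); excludes activations and caches."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


# A 7-billion-parameter model, used here purely as an example size.
fp16_gb = weight_memory_gb(7_000_000_000, "fp16")  # 14.0
int4_gb = weight_memory_gb(7_000_000_000, "int4")  # 3.5
```

Halving the bytes per parameter halves the weight footprint, which is why quantization can move a model onto a smaller GPU class.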

Support & Managed Operations

- 24×7 incident response SLAs.

- Quarterly utilization & efficiency review (KPIs: GPU occupancy %, job wait time, cost per training epoch).

- Continuous patching and security posture updates.

Key Feature Highlights (At a Glance)

- Elastic GPU-as-a-Service: Rapid provisioning that scales from a single-GPU sandbox to thousand‑card clusters.

- On-Prem / Hybrid Option: Private deployment footprints for regulated sectors.

- Pre-Loaded Model Library: Accelerate experimentation; skip manual environment setup.

- Model Distillation & Optimization: Reduce inference spend while preserving accuracy.

- Data Tooling: Vector databases + large text corpus access for advanced LLM workflows.

- Scheduling & Orchestration: Fair-share and priority queueing; hardware heterogeneity abstraction.

- Cross-Border Computation: Distributed training and inference bridging Hong Kong, Mainland, and SE Asia.

- Compliance & Audit: Logging, access policy enforcement, model lineage tracking.

- FinOps Dashboard: Cost transparency, allocation vs. utilization variance analysis.

- Industry Solution Blueprints: Finance document intelligence, manufacturing visual inspection, logistics forecasting, research experimentation.

Example Use Cases

- Financial Document Intelligence: Fine-tune a bilingual LLM for KYC analysis; deploy a distilled inference model with lower latency and controlled hallucination metrics.

- Industrial Visual Inspection: Train computer vision or multimodal models on GPU cluster; push optimized inference container to factory edge nodes.

- Logistics Demand & Route Forecasting: Accelerate time-series model training; orchestrate periodic re-training bursts during seasonal peaks.

- Research & Academia: Provide controlled multi-user workspaces with quota management and reproducible environment snapshots.

Typical Deployment Path

Phase 1: Discovery & KPI Definition (Latency, cost/token, throughput)

Phase 2: Pilot Environment Provisioning (Sandbox + baseline model runs)

Phase 3: Fine-Tuning / Distillation & Evaluation (Quality, cost, performance)

Phase 4: Production Rollout (Optimized capacity reservation, monitoring hooks)

Phase 5: Continuous Optimization (Model refresh, cost governance, new workloads onboarding)

Performance & Scalability (Illustrative)

(Note: Detailed performance parameters—such as tokens/sec for specific model sizes, GPU memory classes, or network bandwidth—can be appended once final benchmarking results from your selected workload set are available. We can integrate vendor-provided figures or conduct empirical baselines during the pilot.)

Example Metrics to Publish (After Validation):

- Training Throughput (tokens/sec) for DeepSeek variant on MetaX vs. alternative GPU.

- Inference Latency P95 (ms) under concurrency load.

- Distillation Inference Cost Reduction (%).

- GPU Utilization Gain via Scheduler vs. static allocation.

Provide raw benchmark artifacts (config hashes, software versions) to bolster credibility.

Differentiators vs. Generic Cloud GPU Rental

- Local Hong Kong hosting helps meet compliance requirements.

- Private service deployment to reduce data

- Heterogeneous GPU abstraction (domestic + mainstream) lowers geopolitical supply risk.

- Integrated model catalog + distillation pipeline accelerates time-to-value.

- FinOps + MLOps + Data governance under one operational framework.

- UDS enterprise integration (identity, observability, policy) reduces internal engineering overhead.

How to Get Started

- Book a Technical Workshop (Discovery + ROI framing)

- Launch a 2–4 Week Pilot (Baseline model training / fine-tune / distillation)

- Receive Optimization Report (Capacity mix, cost forecast, governance plan)

- Transition to Production (SLA activation, monitoring integration, periodic reviews)

For more information about Trio AI GPU-as-a-Service and how it can benefit your organization, please contact us by phone at +852 2851 0271 or by email at [email protected]